Military, Biden-Era Doubts, and the Realities of AI on the Battlefield

A short, clear take: a former Biden official warns about AI risks, a defense contractor says the military is moving forward, and the debate reveals who actually understands this tech. I will explain the claims, show how industry is responding, and point out the political context. Expect blunt assessment and clear lines drawn between caution and capability.

Mieke Eoyang, who served as deputy assistant secretary of defense for cyber policy in the Biden administration, warned that current AI models are a poor fit for military use and could be dangerous. Her concern is straightforward: military adoption of public AI agents could give chatbots leeway to suggest violence or other prohibited behavior. That fear has made headlines and stirred anxiety inside and outside the Pentagon.

“There are any number of things that you might be worried about.” That line from Eoyang has been quoted a lot because it captures the broader anxiety about leaks, misuse, and unexpected consequences. The point is legitimate: handing powerful tools to a huge organization creates risk without the right safeguards.

“A lot of the conversations around AI guardrails have been, how do we ensure that the Pentagon’s use of AI does not result in overkill? There are concerns about ‘swarms of AI killer robots,’ and those worries are about the ways the military protects us,” she told Politico. “But there are also concerns about the Pentagon’s use of AI that are about the protection of the Pentagon itself. Because in an organization as large as the military, there are going to be some people who engage in prohibited behavior. When an individual inside the system engages in that prohibited behavior, the consequences can be quite severe, and I’m not even talking about things that involve weapons, but things that might involve leaks.”

Those quotes underline two truths: the technology can be misused, and big institutions need rigorous controls. A Republican view here is simple: support our troops and secure our tools, but don’t let fear from political appointees freeze progress. The real failure would be to let alarmism hand advantages to strategic rivals.

On the other side of the debate, Tyler Saltsman of EdgeRunner offered a blunt rebuttal: the Department of War is not cowering. He ran tests with an offline chatbot during exercises in Colorado and Kansas and came away impressed with what secure, offline models can do for battlefield information flow. The contrast could not be clearer: one voice warns of catastrophe, another shows disciplined capability in action.

“The Department of War is trying to fortify what their AI strategy looks like; they’re not afraid of it,” Saltsman told Blaze News in response to Eoyang’s claims. He argued that an offline AI, designed for service members without feeding data back to public clouds, solves many of the problems critics point to. That approach reduces the attack surface and keeps operational secrets where they belong.

“It’s concerning that folks who are clueless on technology were put in such highly influential positions.” Saltsman’s insult lands hard because this is a technical fight as much as a policy fight, and experience matters. Conservatives who believe in a strong, capable military see these comments as a call to replace cautionary headlines with real expertise.

Eoyang also worried about malicious actors getting access to military AI and about information loss. “There are any number of things that you might be worried about. There’s information loss; there’s compromise that could lead to other, more serious consequences,” she said, and that is not something to dismiss lightly. Every tool the Pentagon adopts must be hardened against both internal misuse and external attack.

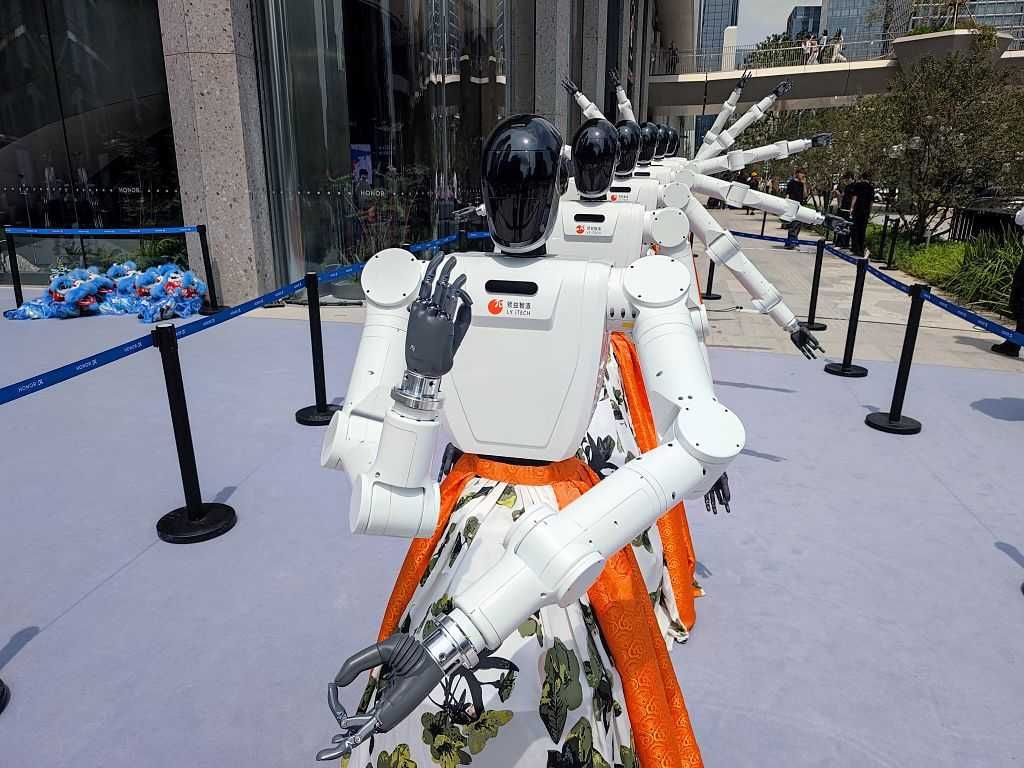

Photo by VCG/VCG via Getty Images

But Saltsman addressed that head-on by keeping his system offline and urging vendors to offer paid offline alternatives. He bluntly summed up the problem with commercial AI providers: “They want your data, they want your prompts, they want to learn more about you.” That suspicion of data-harvesting is common sense for anyone who values operational security.

“They want to spy on you,” he added, and that wording resonates with patriots who see statecraft as a battle over information as much as firepower. For Republicans, the argument is both practical and moral: protect secrets, protect soldiers, and don’t hand tactical advantage to enemies through carelessness. The military must adopt tech without surrendering control of sensitive data.

Saltsman also announced a partnership with Meta to share the technology with allies, emphasizing collaboration across government, industry, and academia. “It’s important for the government to partner with industry and academia and have joint-force operations in this field,” he told Blaze News. That kind of public-private partnership is exactly the model conservatives have pushed for: leverage American innovation while keeping command and control firmly in U.S. hands.

“I’m thankful for Secretary of War Pete Hegseth and all he is doing to reshape the DOW and help it become more effective.” That closing line signals a political alignment: a defense posture that trusts the field commanders and proven private-sector partners, and that rejects the paralysis of bureaucratic fear. The debate is not about romance with tech; it is about competence and national security.

The takeaway is simple and urgent: fear-filled headlines from former officials should not stop practical, secure adoption of AI that helps troops. Republicans should demand both strong safeguards and brisk modernization, because falling behind adversaries is not an option. We can and must do both: secure the data and give our forces the tools they need to win.